The landscape of data science is undergoing a profound transformation, shifting from isolated, manual processes toward integrated, automated, and collaborative ecosystems. At the heart of this evolution lies the convergence of Automated Machine Learning, or AutoML, with sophisticated collaboration platforms. This synergy is not merely a technological trend but a fundamental reimagining of how organizations derive value from data, democratizing advanced analytics and fostering a culture of shared insight and iterative innovation.

AutoML represents a paradigm shift in how machine learning models are built and deployed. Traditionally, developing a robust ML model required a rare combination of specialized skills in statistics, programming, and domain expertise. Data scientists would spend weeks or months on tedious, repetitive tasks: data cleaning, feature engineering, algorithm selection, hyperparameter tuning, and model validation. This process was not only time-consuming but also created a significant bottleneck, limiting the scale and speed at which businesses could operate analytically. AutoML platforms attack this very problem by automating these repetitive and complex steps. Using techniques like Bayesian optimization, evolutionary algorithms, and meta-learning, these systems can automatically explore thousands of candidate model configurations and select the best-performing one they find for a given dataset, problem statement, and compute budget. This automation can cut the time-to-insight from months to days or even hours, freeing data scientists to focus on more strategic tasks like problem framing, interpreting results, and ensuring ethical AI practices.
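To make the idea of automated configuration search concrete, here is a minimal sketch. It uses plain random search, which is a simpler stand-in for the Bayesian and evolutionary methods named above, not an implementation of them. The synthetic data, the one-parameter ridge model, and every function name are assumptions chosen for illustration; a real AutoML system searches over far richer spaces.

```python
import random


def make_data(n, rng):
    """Synthetic 1-D regression data, y = 3x + noise (illustrative only)."""
    xs = [rng.uniform(-1, 1) for _ in range(n)]
    ys = [3.0 * x + rng.gauss(0, 0.1) for x in xs]
    return xs, ys


def fit_ridge(xs, ys, lam):
    """Closed-form 1-D ridge regression without intercept."""
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return sxy / (sxx + lam)


def mse(w, xs, ys):
    """Mean squared error of the line y = w * x."""
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def random_search(train, valid, n_trials, rng):
    """Try n_trials random configurations; return the best and the full log."""
    trials = []
    for _ in range(n_trials):
        lam = 10 ** rng.uniform(-4, 2)  # sample the penalty log-uniformly
        w = fit_ridge(*train, lam)
        trials.append({"lam": lam, "w": w, "val_mse": mse(w, *valid)})
    best = min(trials, key=lambda t: t["val_mse"])
    return best, trials


rng = random.Random(0)  # fixed seed so the search is repeatable
train = make_data(200, rng)
valid = make_data(100, rng)
best, trials = random_search(train, valid, n_trials=50, rng=rng)
print(f"best lam={best['lam']:.4g}, val_mse={best['val_mse']:.4f}")
```

The loop is the part that matters: propose a configuration, fit, score on held-out data, keep the best. Smarter search strategies change how the next configuration is proposed, not the overall shape of the loop.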

However, the power of automation reaches its full potential only when coupled with effective collaboration. Data science is inherently a team sport. A single project might involve data engineers curating pipelines, data scientists building models, ML engineers deploying them, business analysts interpreting outputs, and domain experts providing crucial context. Without a centralized platform, this workflow devolves into chaos—a tangled mess of version conflicts, scattered code notebooks, inconsistent environments, and broken communication channels. This is where modern data science collaboration platforms come into play. These platforms act as a unified workspace, providing version control for code and models, shared compute environments to ensure reproducibility, and tools for documenting experiments and sharing findings. They become the single source of truth for a project, ensuring that every team member, regardless of their technical proficiency, is aligned and can contribute effectively.

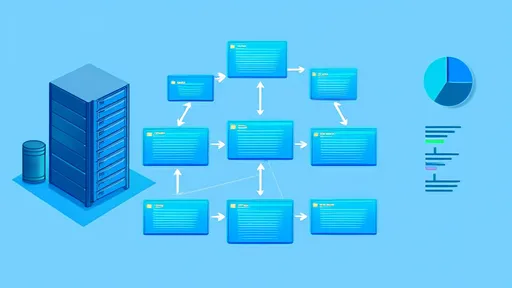

The true magic happens when AutoML is seamlessly embedded within these collaborative environments. Imagine a platform where a data engineer can preprocess and upload a dataset. A business analyst, with no coding experience, can then use an intuitive, drag-and-drop AutoML interface to start a model training run. The platform automatically tracks every experiment, logging all parameters, metrics, and resulting artifacts. A senior data scientist can review and validate the trained model through a shared dashboard and approve it for deployment. An ML engineer can then, with a single click, deploy the approved model as a REST API endpoint, all within the same environment. This end-to-end integration creates a frictionless workflow that breaks down silos, accelerates the model lifecycle, and ensures that the final output is a product of collective intelligence and a rigorous, transparent process.
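The tracking and approval steps in this workflow can be sketched in a few lines. What follows is a hypothetical in-memory stand-in, not the API of any particular platform: the class names, status values, endpoint path, and example metric are all invented for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Run:
    """One tracked experiment: its inputs, results, and review status."""
    run_id: int
    params: dict
    metrics: dict
    status: str = "pending_review"  # pending_review -> approved -> deployed
    history: list = field(default_factory=list)  # audit trail of (actor, action)


class ExperimentLog:
    """Hypothetical in-memory stand-in for a platform's tracking service."""

    def __init__(self):
        self._runs = {}

    def log_run(self, actor, params, metrics):
        run = Run(run_id=len(self._runs) + 1, params=dict(params), metrics=dict(metrics))
        run.history.append((actor, "logged"))
        self._runs[run.run_id] = run
        return run

    def approve(self, run_id, reviewer):
        run = self._runs[run_id]
        run.status = "approved"
        run.history.append((reviewer, "approved"))

    def deploy(self, run_id, engineer):
        run = self._runs[run_id]
        if run.status != "approved":
            raise PermissionError(f"run {run_id} has not been approved")
        run.status = "deployed"
        run.history.append((engineer, "deployed"))
        # Placeholder path only; a real platform would provision an endpoint here.
        return f"/models/{run_id}/predict"


log = ExperimentLog()
# The parameter and metric values below are made up for the example.
run = log.log_run("analyst", {"model": "gbm", "max_depth": 4}, {"auc": 0.91})
log.approve(run.run_id, reviewer="senior_ds")
endpoint = log.deploy(run.run_id, engineer="ml_eng")
print(endpoint, run.history)
```

The design choice worth noting is the gate in `deploy`: a run that has not passed review cannot be deployed, and every state change is appended to the run's history, so who did what remains traceable afterward.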

The implications for businesses are substantial. This combined approach significantly lowers the barrier to entry for practicing data science. Citizen data scientists, meaning professionals with domain knowledge but limited coding skills, can now actively participate in building predictive models, vastly expanding the organization's analytical capacity. It also enhances the reproducibility and auditability of ML work. Every decision, from data version to final model parameters, is logged and traceable, which is critical for debugging, compliance, and governance in regulated industries. Furthermore, it optimizes resource allocation. With the tedious work automated, highly paid data scientists and engineers can dedicate their time to solving more complex, novel problems that truly require human ingenuity, maximizing return on investment in talent and technology.

Despite its promise, the journey toward fully automated and collaborative data science is not without its challenges. There is a valid concern that over-reliance on AutoML could lead to a "black box" problem, where teams may struggle to understand why a model makes a certain prediction, eroding trust and potentially leading to unethical outcomes. The most advanced platforms are countering this by baking in explainable AI (XAI) features, providing clarity into model behavior. Another challenge is cultural resistance; fostering a collaborative mindset requires breaking down long-established departmental silos and encouraging a shared ownership of data-driven outcomes. Success, therefore, depends as much on change management and training as it does on technology selection.

Looking ahead, the fusion of AutoML and collaboration platforms is set to become the default operating model for modern data-driven enterprises. We can expect these platforms to become even more intelligent, perhaps offering automated recommendations for data quality improvement or suggesting novel features based on past project successes. The line between developer, data scientist, and business user will continue to blur, creating a new class of collaborative problem-solvers. In this new era, competitive advantage will stem not from having a handful of elite data scientists, but from building a pervasive, efficient, and collaborative analytics engine powered by automation. This is the future of data science: not just automated, but universally accessible and profoundly collaborative.

By /Aug 26, 2025
